At the end of my last post about the AI-enabled tools I regularly use, I mentioned that Apple was expected to embrace AI big-time at the following week’s WWDC (Worldwide Developers Conference). And did they ever! The entire second half of the 90-minute presentation was devoted to “Apple Intelligence” (or “A.I.” … Get it?), Apple’s name for its suite of AI-powered enhancements that will roll out later this year. Apple Intelligence is a more powerful version of the “machine learning” routines that many of Apple’s apps already use.

I am excited about what this will provide for many people. First, though, it’s essential to figure out what Apple Intelligence actually is. I have already read a substantial amount of material in the popular press, from pundits, and elsewhere that is misleading, misguided or flat-out wrong; this is my humble attempt to set the record straight and clear up some confusion. Speaking of confusion, for the rest of this article, “AI” stands for artificial intelligence and “A.I.” stands for Apple Intelligence. Get it? Got it? Good!

Don’t Trust Your Copilot

As I also previously mentioned, Apple is famous for letting others take their best shot and then coming up with something better. This has already happened: last month, Microsoft breathlessly introduced its new line of AI-enabled PCs, called “Copilot+” PCs.

Using the same kind of Arm-based processor as Apple Silicon Macs, these new PCs are similar to current Macs in speed and battery life. However, they are saddled with a version of Windows that has “Copilot” features woven so tightly into it that they can’t be turned off. As many experts have already warned, it’s not only intrusive but a security nightmare.

The reason for the loud, clanging alarm bells? The operating system takes a screenshot of whatever the user is doing every few seconds, and then the AI converts those screenshots to text that is stored locally on the computer. Text anyone can read! Yes, really. Plus, anything requiring more than the local PC can handle uses easily hackable cloud computing. In the name of brainpower, this new hardware and software combination is so braindead I wouldn’t go near it with a 10-foot pole.

Apple’s Approach Puts Privacy First

Apple’s current devices that can run Apple Intelligence (iPhone 15 Pro and Pro Max, plus all M-series iPads and Macs) already have the architecture to work with AI quickly and efficiently; unlike with PCs, no new hardware is needed. (Sorry, Intel-based Mac users… but you knew this would happen eventually.) If a request requires more AI power than the device can handle, it is sent to Apple’s own “Private Cloud Compute” service. This runs on new servers that Apple built solely for this purpose and that nothing else can access. They are heavily encrypted as well and retain no user information whatsoever. In other words, a total 180 from Microsoft’s approach.

So… what does it do?

Apple Intelligence will aid in almost everything we do on our devices, starting with:

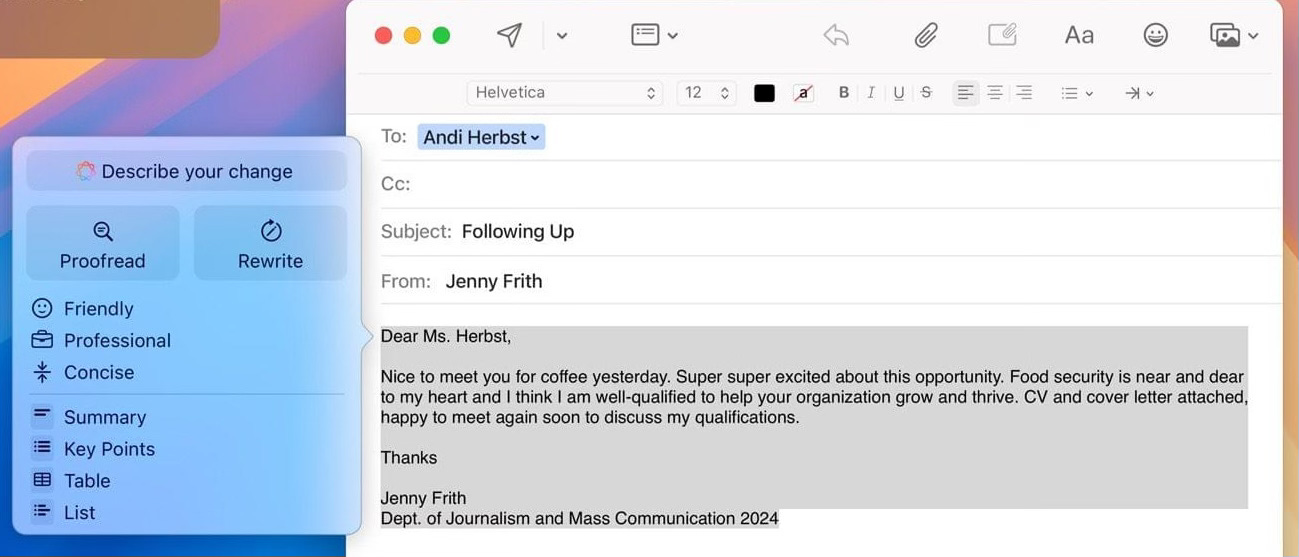

• Language — If you are writing a text message, an email, a report or a term paper, A.I. will help you in much the same way that Grammarly does now. (If you own stock in the company that makes Grammarly, I’d sell it.) As seen during WWDC demos, the interface is clean, natural and easy to use. A bonus is the ability to have A.I. quickly summarize long emails and documents — for me, that feature alone is worth having!

It’s not just writing that gets a boost from A.I. — Focus Mode, Notifications and more will also benefit. As A.I. gets to know you and those you regularly communicate with, it can figure out priority notifications for Mail and Messages and reduce interruptions from less important contacts. Then there’s…

• Images — The Photos app will now be able to easily remove unwanted people and objects from photos while also vastly increasing the ability to identify what is in the images themselves. Right now, you can search in Photos for people’s names (if you remember to identify them), dogs, plants, and a few other objects. Soon, you’ll be able to search for a photo by typing in “woman wearing sunglasses in a pink dress with a birthday cake,” and it’ll find the matching photos. You can even search this way for a particular scene in the middle of a video! This power will be a boon to our ever-growing photo libraries and what we can do with them.

What about creating our own images? The new A.I.-powered app Image Playground will allow users to generate them. This app will also be built into Notes, Messages and Freeform. There are three styles to choose from: illustration, sketch, or animation. The results are definitely not photorealistic, which is probably intentional — no deepfakes here! The purpose behind Image Playground is in the name: to have fun and be creative. Another remarkable aspect is the new Genmoji feature, which essentially kills emojis as we know them. (And good riddance!) Now, you can make any emoji you can think of for any reason. I’m sure everyone will use restraint here and avoid poor taste. (Insert ‘wink’ emoji here.)

• Voice — Siri will get the most significant overhaul in its history, with the Holy Grail of “context” finally within reach. Siri will understand what you’re saying and, more importantly, the broader context so she can get you the results you really wanted in the first place. This new version of Siri could be the game-changer of the decade if it works the way it did during the WWDC demo.

• ChatGPT integration — This is where I’ve seen a lot of misinformation; Elon Musk especially made a fool of himself (what else is new?) over it. The short and sweet: if a user needs something that A.I. and Private Cloud Compute can’t handle, they will be asked if it is OK to hand it over to ChatGPT for further work. Users will always be asked permission first, and their identities and IP addresses will be hidden for additional privacy. Many ChatGPT features will be free of charge, and if a user already subscribes, their account can be linked for tighter integration… sweet! Thanks to this deal, OpenAI will be getting a lot of new users who may decide to pay for further features if they like what they see, so it’s a win-win. Apple has said it may partner with other AI companies down the road, but right now, OpenAI is the leader, so this partnership makes sense.

Coming soon to a device near you!

Apple Intelligence will be included in the next Mac operating system, macOS Sequoia, which will be released this October along with iOS 18 and iPadOS 18 for iPhones and iPads. Some people have been griping that only iPhone 15 Pro and Pro Max models, plus this fall’s upcoming iPhone 16 line (all models), can run Apple Intelligence; they claim Apple is doing this on purpose to force people into buying new phones. Nope! If that were true, it would also be limited to the newest Macs.

The ever-reliable MacRumors lists the reasons here, thanks to a conversation with Apple’s marketing and software chiefs:

Bottom line: The iPhone 15 Pro and Pro Max processors are the only ones powerful enough to run Apple Intelligence at decent speeds while not draining the battery or making the phone white-hot.

That’s a wrap!

Trust me, I could only skim the surface of what Apple Intelligence can do once it arrives this fall. If you found this post interesting and would like to take a deeper dive, this great overview from MacStories is just the ticket; access it here:

Your friendly neighborhood Tech Daddy

Tech Daddy Substack Founding Members

Leigh Adams Edgar Johnson